Calculating the Total Cost of a GPU Cluster

GPU-hour pricing is the most visible metric in AI infrastructure — and often the most misleading. Two providers may advertise similar rates, yet deliver very different total costs once utilization, reliability, and operational dynamics are factored in production scale.

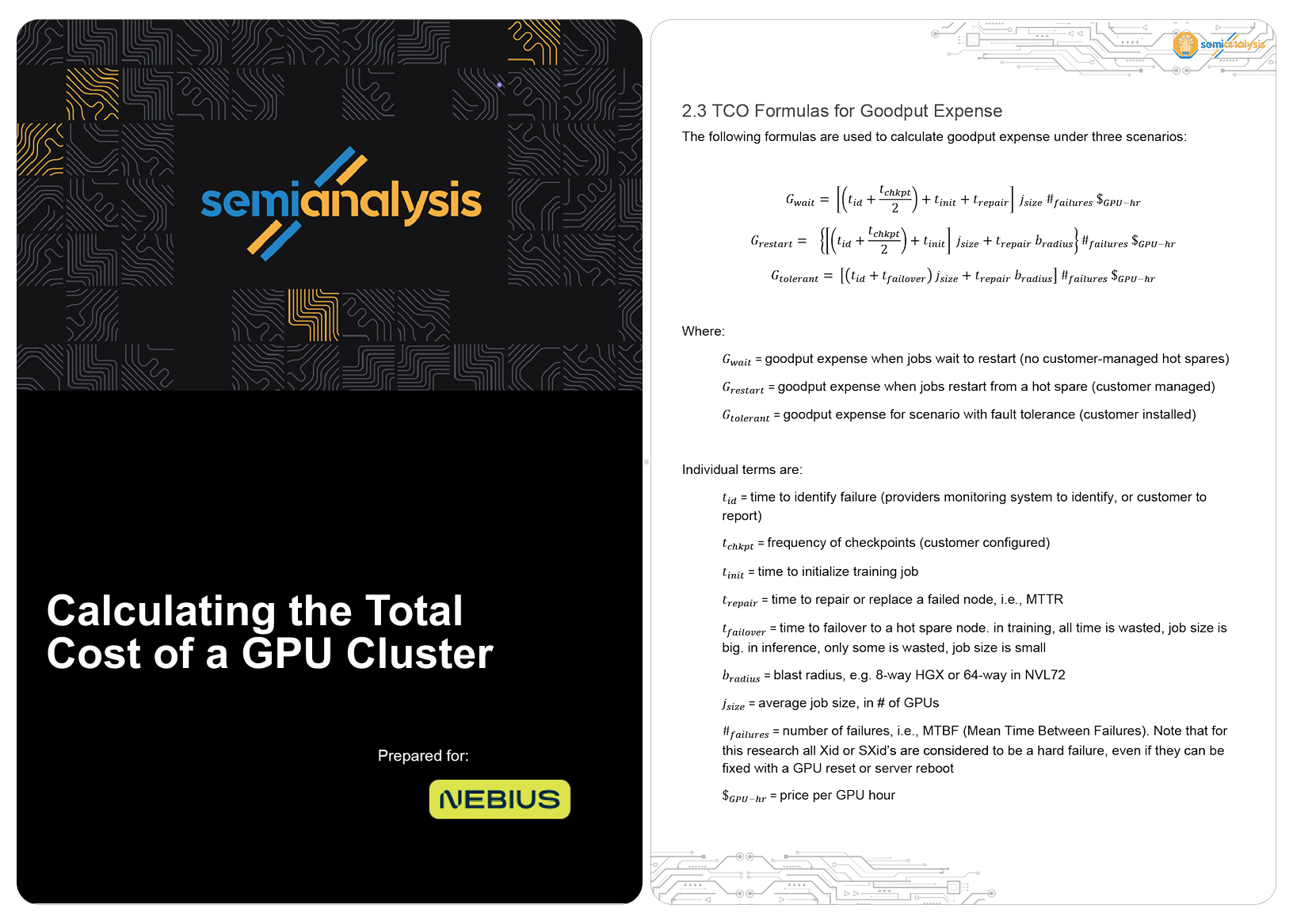

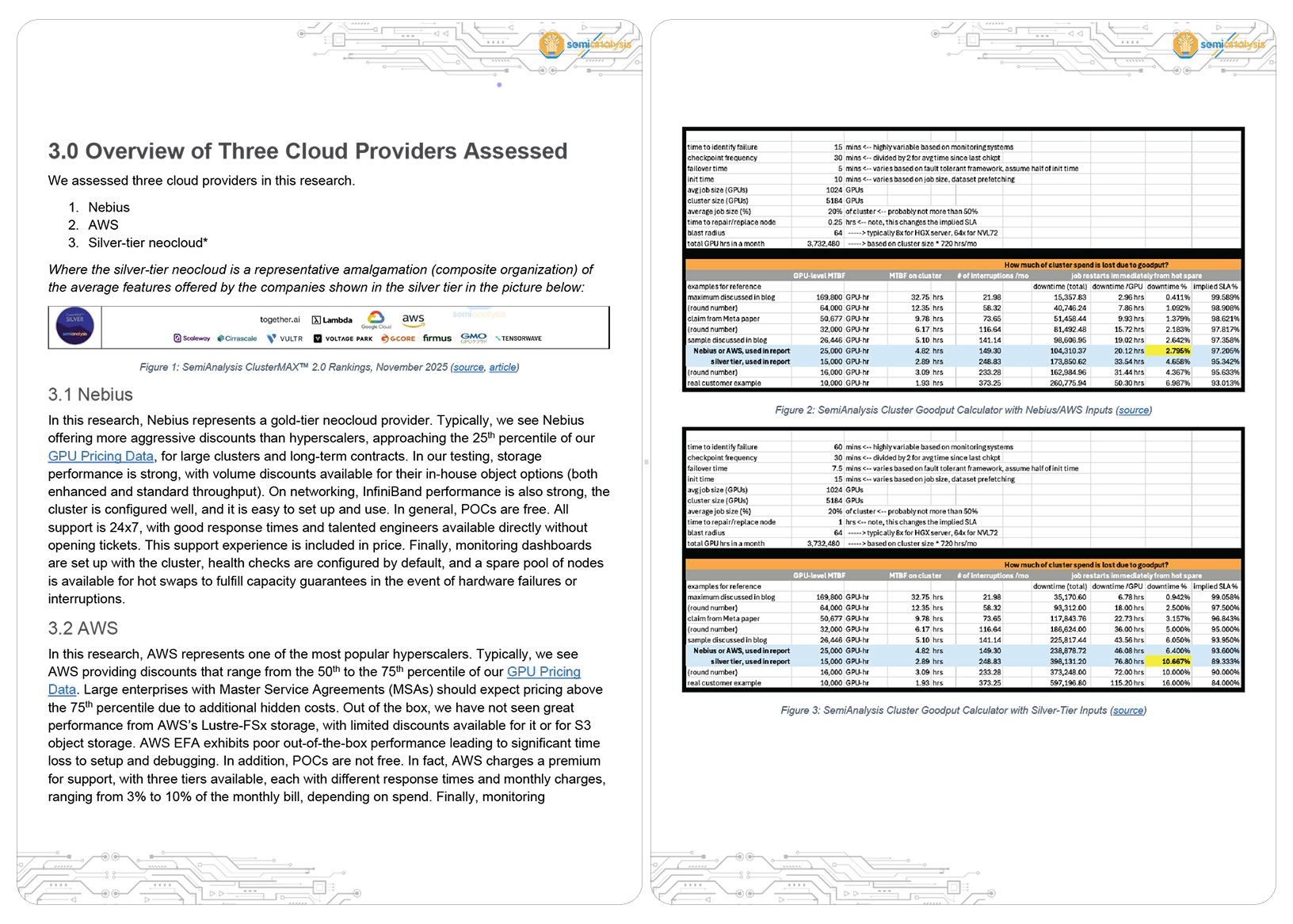

To get to the bottom of the true cost of a cluster and quantify these hidden costs, Nebius commissioned SemiAnalysis to model three real-world workloads — large LLM pre-training, multimodal RL research, and production inference — and calculate total cost of ownership (TCO) across different infrastructure providers.

Across all three scenarios, Nebius delivered the lowest TCO.

-

AWS was 9% more expensive on a TCO-adjusted basis when GPU-hour pricing was held constant, and up to 113% more expensive when using SemiAnalysis real-world pricing data

-

Silver-tier providers * were 4%-8% more expensive on a TCO-adjusted basis when GPU-hour pricing was held constant

Inside the report:

-

How infrastructure reliability and cluster design shape the true cost per completed model

-

How downtime, recovery time, and wasted compute quietly compound at scale

-

Why networking performance and operational maturity determine real-world cluster productivity

-

Why Nebius can deliver lower overall TCO, even when headline GPU pricing appears comparable

* “Silver-tier providers” refers to a representative composite of the average capabilities offered by providers included in the SemiAnalysis ClusterMax ranking.